Trillions Spent on ‘Climate Change’ Based on Faulty Temperature Data, Climate Experts Say

By Katie Spence

To preserve a “livable planet,” the Earth can’t warm more than 1.5 degrees Celsius above pre-industrial levels, the United Nations warns.

Failure to stay below that threshold could lead to several catastrophes, including increased droughts and weather-related disasters, more heat-related illnesses and deaths, and less food and more poverty, according to NASA.

To avert the looming tribulations and limit global temperature increases, 194 member states and the European Union signed the U.N. Paris Agreement in 2016. The legally binding international treaty aims to “substantially reduce global greenhouse gas emissions.”

After the agreement, global spending on climate-related projects rose sharply.

In 2021 and 2022, global climate finance from public and private sources averaged $1.3 trillion a year, according to the nonprofit advisory group Climate Policy Initiative.

That’s nearly double the rate in 2019 and 2020, which came in at $653 billion per year, and well above the $364 billion per year in 2011 and 2012, the report found.
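The comparison can be checked with simple division using the article’s rounded figures. This is a minimal arithmetic sketch, not a reanalysis of the Climate Policy Initiative report; the helper name `growth_ratio` is illustrative only.

```python
# Average annual climate finance quoted above, in billions of U.S. dollars
# (Climate Policy Initiative figures as rounded in this article).
spending = {
    "2011-2012": 364,
    "2019-2020": 653,
    "2021-2022": 1300,  # "$1.3 trillion"
}


def growth_ratio(later: float, earlier: float) -> float:
    """How many times larger the later average is than the earlier one."""
    return later / earlier


latest = spending["2021-2022"]
for period in ("2011-2012", "2019-2020"):
    print(f"2021-2022 vs {period}: {growth_ratio(latest, spending[period]):.2f}x")
```

With these inputs the ratios come out to about 1.99 and 3.57, so the 2021-2022 level is just under twice the 2019-2020 level and roughly three and a half times the 2011-2012 level.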

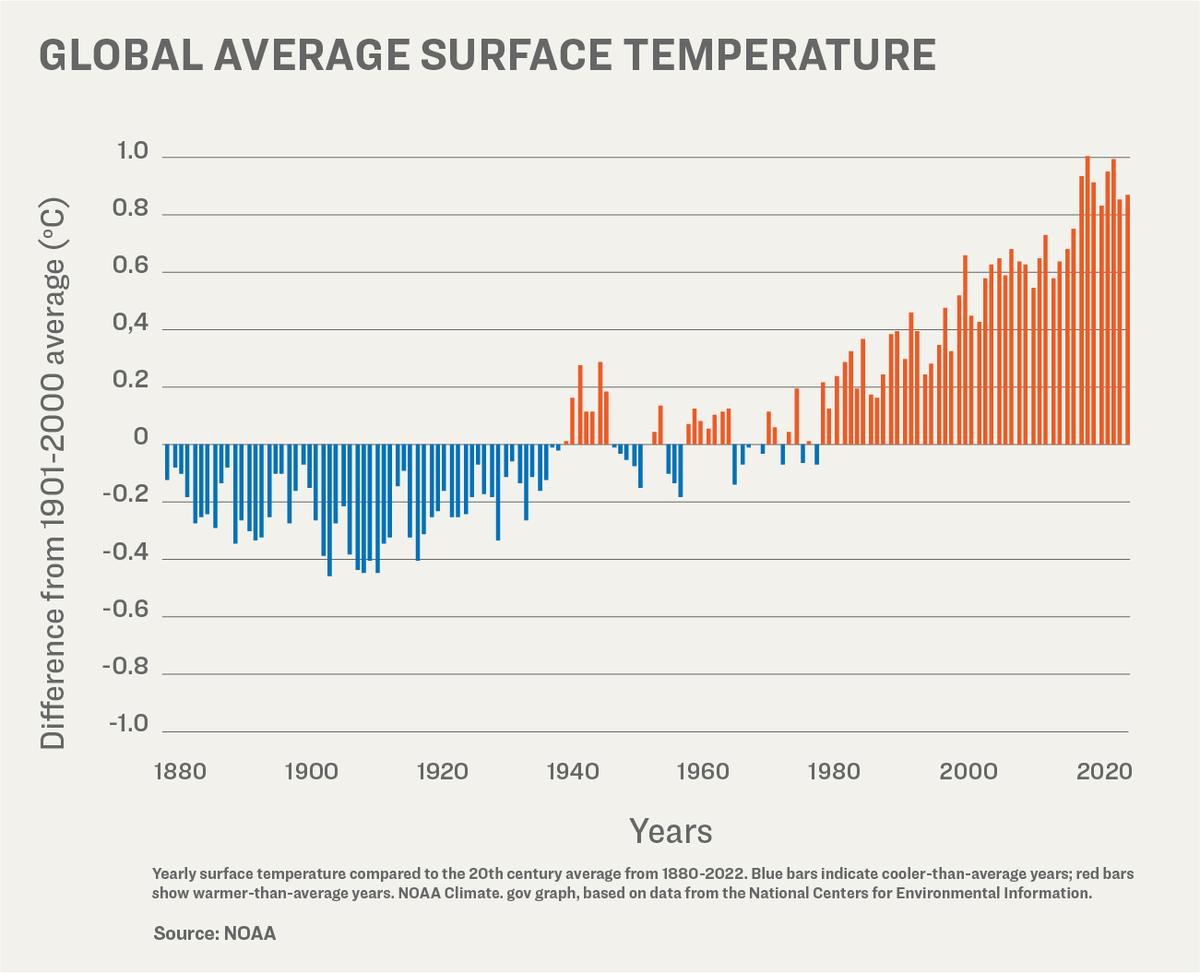

Despite the money pouring in, the National Oceanic and Atmospheric Administration (NOAA) reported that 2023 was the hottest year on record.

NOAA’s climate monitoring stations found that the Earth’s average land and ocean surface temperature in 2023 was 1.35 degrees Celsius above the pre-industrial average.

“Not only was 2023 the warmest year in NOAA’s 174-year climate record—it was the warmest by far,” said Sarah Kapnick, NOAA’s chief scientist.

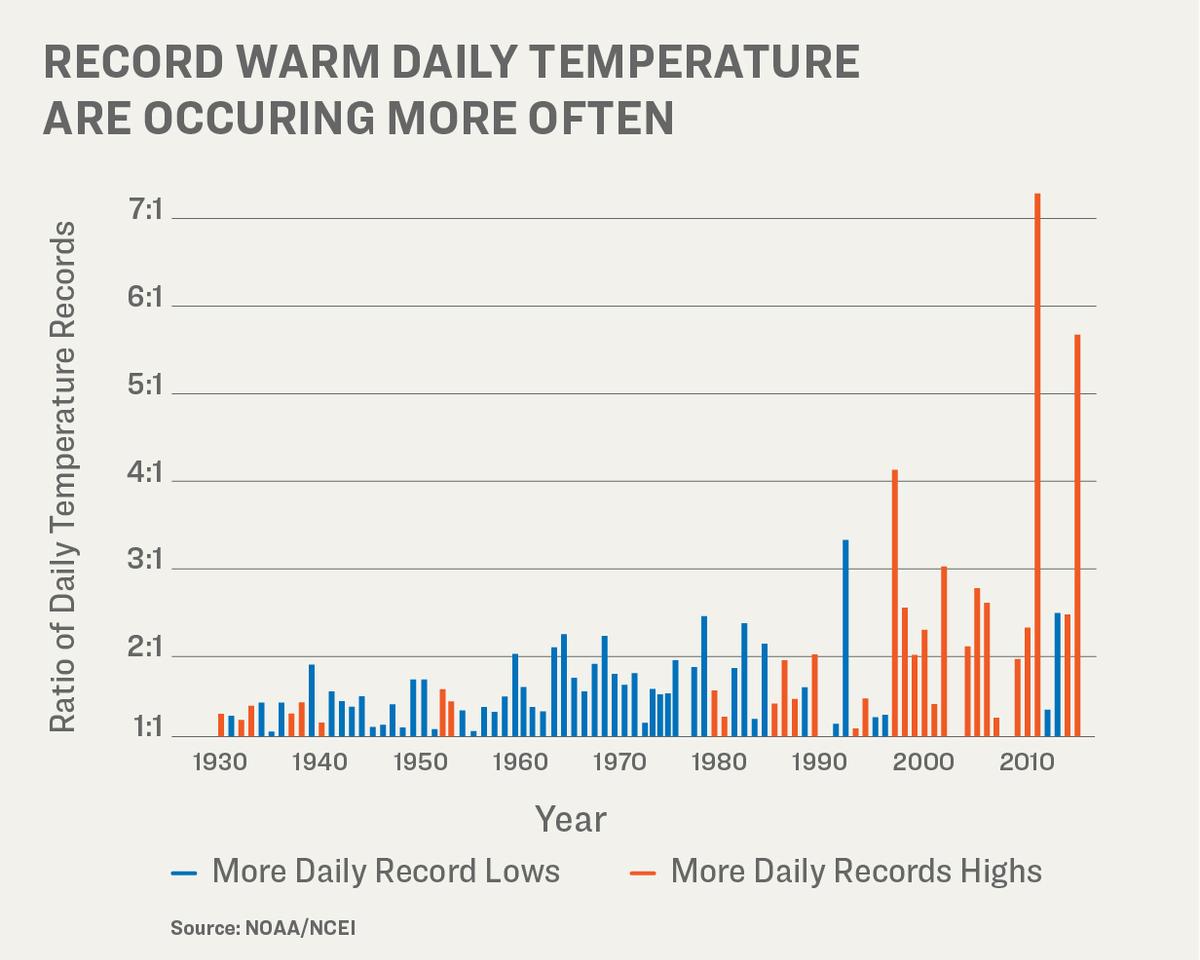

“A warming planet means we need to be prepared for the impacts of climate change that are happening here and now, like extreme weather events that become both more frequent and severe.”

But a growing chorus of climate scientists is saying that the temperature readings are faulty and that the trillions of dollars pouring in are based on a problem that doesn’t exist.

More than 90 percent of NOAA’s temperature monitoring stations have a heat bias, according to Anthony Watts, a meteorologist, senior fellow for environment and climate at The Heartland Institute, author of climate website Watts Up With That, and director of a study that examined NOAA’s climate stations.

“And with that large of a number, over 90 percent, the methods that NOAA employs to try to reduce this don’t work because the bias is so overwhelming,” Mr. Watts told The Epoch Times.

“The few stations that are left that are not biased because they are, for example, outside of town in a field and are an agricultural research station that’s been around for 100 years … their data gets completely swamped by the much larger set of biased data. There’s no way you can adjust that out.”

Meteorologist Roy Spencer agreed.

“The surface thermometer data still have spurious warming effects due to the urban heat island, which increases over time,” Mr. Spencer said.

He is a principal research scientist at the University of Alabama in Huntsville, the U.S. Science Team leader for the Advanced Microwave Scanning Radiometer on NASA’s Aqua satellite, and the recipient of NASA’s Exceptional Scientific Achievement Medal for his work with satellite-based temperature monitoring.

Mr. Spencer also said computerized climate models used to drive changes in energy policy are even more faulty.

Lt. Col. John Shewchuk, a certified consulting meteorologist, said the problems with temperature readings go beyond heat bias. The retired lieutenant colonel was an advanced weather officer in the Air Force.

“After seeing many reports about NOAA’s adjustments to the USHCN [U.S. Historical Climatology Network] temperature data, I decided to download and analyze the data myself,” Lt. Col. Shewchuk told The Epoch Times.

“I was able to confirm what others have found. It is obvious that, overall, the past temperatures were cooled while the present temperatures were warmed.”

He contends that NOAA and NASA have adjusted historical temperature data in such a way as to make the past appear colder and, by so doing, make the current warming trend more pronounced.

Faulty Temperature Readings

The urban heat island effect causes higher temperatures in areas where there are more buildings, roads, and other forms of infrastructure that absorb and then radiate the sun’s heat, according to the Environmental Protection Agency.

The agency estimates that “daytime temperatures in urban areas are 1–7 degrees Fahrenheit higher than temperatures in outlying areas, and nighttime temperatures are about 2–5 degrees Fahrenheit higher.”
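The EPA states its ranges in Fahrenheit, while the rest of this article uses Celsius. A temperature difference converts by the factor 5/9 alone, because the 32-degree offset used for absolute readings cancels when two temperatures are subtracted. A minimal sketch (the helper name `f_diff_to_c` is illustrative):

```python
def f_diff_to_c(delta_f: float) -> float:
    """Convert a temperature *difference* from Fahrenheit to Celsius.

    Differences scale by 5/9 only; the 32-degree offset used for absolute
    readings cancels out when two temperatures are subtracted.
    """
    return delta_f * 5 / 9


# EPA urban-vs-outlying ranges quoted above, in degrees Fahrenheit.
ranges_f = {"daytime": (1, 7), "nighttime": (2, 5)}

for label, (low, high) in ranges_f.items():
    print(f"{label}: {f_diff_to_c(low):.1f} to {f_diff_to_c(high):.1f} degrees C warmer")
```

This puts the EPA’s daytime range at roughly 0.6 to 3.9 degrees Celsius and the nighttime range at roughly 1.1 to 2.8 degrees Celsius.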

Consequently, NOAA requires all its climate observation stations to be located at least 100 feet away from elements such as concrete, asphalt, and buildings.

However, in March 2009, Mr. Watts released a report showing that 89 percent of NOAA’s stations had heat bias issues because they were located within 100 feet of those elements; many were located near airport runways.

“We found stations located next to the exhaust fans of air conditioning units, surrounded by asphalt parking lots and roads, on blistering-hot rooftops, and near sidewalks and buildings that absorb and radiate heat,” Mr. Watts said.

“We found 68 stations located at wastewater treatment plants, where the process of waste digestion causes temperatures to be higher than in surrounding areas.”

The report concluded that the U.S. temperature record was unreliable, and because it was considered “the best in the world,” global temperature databases were also “compromised and unreliable.”

Following the report, the Commerce Department’s Office of Inspector General (OIG) and the Government Accountability Office confirmed Mr. Watts’s findings and stated that NOAA was taking steps to address the issues.

“NOAA acknowledges that there are problems with the USHCN data due to biases introduced by such means as undocumented site relocation, poor siting, or instrument changes,” the OIG report reads.

“All of the experts thought that an improved, modernized climate reporting system is necessary to eliminate the need for data adjustments.”

Despite the assurances, Mr. Watts doubted that NOAA had addressed the issues, so in April and May 2022, he and his team revisited many of the same temperature stations they had observed in 2009.

He published his findings in a new study on July 27, 2022. It found that an even larger share, approximately 96 percent of NOAA’s temperature stations, still failed to meet the agency’s own standards.

“There are two main biases in the surface temperature network for the United States, and most likely the world, that I have identified,” Mr. Watts said.

“The biggest bias is the urban heat island effect. What happens is that because heat is retained by the surfaces and released into the air at night, the night’s low temperature is not as low as it could be if the thermometer were outside of town and in a field.”

Over the years, he said, more and more infrastructure has been built up around the thermometer locations, and at night, the asphalt and concrete release the absorbed heat and push up the temperature.

“You can look at any set of climate data, no matter who produces it, and you can see this effect. The low temperatures are trending upward much faster, and the high temperatures are virtually unchanged. But it’s the average temperature that’s being used to track climate change,” Mr. Watts said.
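Mr. Watts’s point about the average can be illustrated with arithmetic. Stations that record only the day’s high and low conventionally report the daily mean as the midpoint of the two, so a rise in the overnight low alone lifts the mean by half as much. The station values below are invented purely for illustration.

```python
def daily_mean(t_max: float, t_min: float) -> float:
    # Max/min stations report the daily mean as the midpoint of the
    # day's high and low.
    return (t_max + t_min) / 2


# Hypothetical station: the high stays flat at 25 C while the overnight
# low rises by 0.3 C per decade (numbers invented for illustration).
t_max = 25.0
for decade in range(4):
    t_min = 12.0 + 0.3 * decade
    print(f"decade {decade}: low {t_min:.1f} C, mean {daily_mean(t_max, t_min):.2f} C")
```

Each 0.3-degree step in the low moves the computed mean by 0.15 degrees, even though the high never changes.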

He said that even though both NOAA and NASA claim that they can adjust their data to account for the urban heat island effect, the bias is impossible to overcome because the problem impacts 96 percent of surface stations.

He said the few climate stations free of heat bias show half the rate of warming currently being reported.

Transient Temperature

The second main bias that Mr. Watts identified involves transient temperature readings: short-term temperature changes that can produce a false reading.

NOAA started replacing its mercury thermometers in the mid-to-late 1980s, according to Mr. Watts.

The majority of its network now consists of electronic thermometers that can measure temperature within seconds.

“But they’re only recording the high and the low temperature of the day, and these can be biased by simple effects of wind,” he said.

“For example, you can have one of these temperature sensors placed near a parking lot, which happens to be to the east of the thermometer. And the wind has been predominantly from the south all through the day. But then, all of a sudden, you get a wind shift, and the wind shift could be caused by a number of different things. It could be caused by a change in the weather patterns. It could be caused by something blocking the wind from the south, like a semi-truck pulling up nearby.

“So you get wind shifting out of the east suddenly, coming across the parking lot, and picking up that radiant heat. And the thermometer will respond to that in the space of a second or two. And it will report a high temperature from that wind gust that does not necessarily represent the weather that day. It’s an anomaly. And the same thing can happen at night.”
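The transient effect Mr. Watts describes comes from recording only the single highest (or lowest) instantaneous reading. The sketch below uses a hypothetical one-reading-per-second trace with a brief gust. The 60-second block average is an assumed mitigation chosen for illustration, not a description of how NOAA, the Met Office, or the Australian Bureau of Meteorology actually process their data.

```python
from statistics import mean

# Hypothetical one-reading-per-second trace (degrees C): a steady 30 C
# afternoon with a two-second gust off a hot parking lot.
readings = [30.0] * 300
readings[150] = 31.8
readings[151] = 31.5

# A max/min recorder keeps the single highest instantaneous value.
instant_max = max(readings)

# Assumed mitigation for illustration: average over 60-second blocks
# first, then take the highest block average.
window = 60
block_means = [mean(readings[i:i + window]) for i in range(0, len(readings), window)]
smoothed_max = max(block_means)

print(f"instantaneous max: {instant_max:.1f} C")
print(f"max of 60-second averages: {smoothed_max:.2f} C")
```

The two-second spike sets the recorded high on its own, while averaging over a minute before taking the maximum dilutes it to a small fraction of a degree.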

Mr. Watts said transient temperature is such a well-known problem that the Met Office in the UK and the Australian Bureau of Meteorology have abandoned their high-tech networks and are retooling to get more accurate readings.

“These are the problems that NOAA has not really fully addressed,” he said. “The folks who do the climate data never leave the office, and they don’t administer these stations. They [the stations] are left to the National Weather Service field offices—and the National Weather Service field offices are understaffed.

“Some stations, like out here in the West, are hundreds of miles away or more from the National Weather Service office, so they can’t get out there and do maintenance regularly. And when the National Weather Service went to modernization in the early 1990s, they closed many Weather Service offices around the country.

“And so, the maintenance on these thermometers—and a lot of these monitors are run by the public, a lot are volunteers—has fallen off. I’ve had volunteers, when I go visit, ask me if I can get the Weather Service to come out and fix something. But they can’t, because the problem is, they don’t have the budget.

“The bottom line is that the Cooperative Observer Network, the COOP network—it’s literally a ragtag bunch of volunteers combined with some public agencies, such as police stations, fire stations, forest service, and so on.

“This is not a rigorously scientifically controlled network at the operational level.”

NOAA itself states on its website that its temperature readings aren’t precise and that the agency attaches a margin of error to its temperatures.

Neither NOAA nor NASA responded by press time to The Epoch Times’ request for comment regarding transient temperature anomalies or Mr. Watts’s claim that adjusting for a heat bias is impossible.

Adjusting Temperature Readings

NOAA has also been adjusting historical temperature data.

“Normally, when correcting data errors, you would expect a more random result in the data adjustments—both up and down—but the results instead show a systematic process of cooling the past and warming the present,” Lt. Col. Shewchuk said.

An example is Iceland’s Reykjavik station.

The February 1936 record for the Reykjavik station showed a mean temperature of minus 0.2 degrees Celsius for the month and an annual mean temperature of 5.78 degrees Celsius, according to the Goddard Institute for Space Studies Surface Temperature Analysis (GISTEMP). The original GISTEMP monthly data was known as v2, or version 2.

In 2019, NASA’s Goddard Institute for Space Studies, which produces GISTEMP, released an updated version of its analysis, GISTEMP v4.

It shows Reykjavik station’s mean temperature for February 1936 as minus 1.02 degrees Celsius, and the annual mean temperature as 5.01 degrees Celsius. That’s a downward adjustment of 0.82 degrees Celsius for the month and 0.77 degrees Celsius for the year after the software update.
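The adjustment sizes follow from subtracting the v2 values from the v4 values. A minimal check using only the Reykjavik figures quoted above:

```python
# GISTEMP values for Reykjavik quoted above (degrees C).
v2 = {"feb_1936": -0.2, "annual_1936": 5.78}
v4 = {"feb_1936": -1.02, "annual_1936": 5.01}

for key in v2:
    adjustment = v4[key] - v2[key]  # negative means v4 is cooler than v2
    print(f"{key}: {adjustment:+.2f} C")
```

Both differences are negative, matching the downward adjustments of 0.82 and 0.77 degrees Celsius described above.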

Comparing the GISTEMP v2 monthly data with the v4 monthly data shows an overall cooling of the past.

“Incredibly, the range of data adjustments exceeds 2 degrees Fahrenheit, which is significant with respect to current temperature trends,” Lt. Col. Shewchuk said.

“NOAA also employs a very unusual follow-on data adjustment process, where they periodically go back and re-adjust the previously adjusted data. This makes it difficult to find ground truth, which seems more like shifting sands.”

In response to The Epoch Times’ request for comment about the adjustments to historical data, NOAA’s public affairs officer, John Bateman, said he reached out to one of NOAA’s National Centers for Environmental Information (NCEI) climate experts, who responded: “NCEI applies corrections to account for historical changes in station location, temperature instrumentation, observing practice, and, to a lesser extent, siting conditions. Our approaches are documented in the peer-reviewed literature. At the national scale, the corrected data are in good agreement with the U.S. Climate Reference Network (USCRN), which has pristine siting conditions.”

NASA didn’t respond to The Epoch Times’ request for comment about adjustments to historical data.

Satellite Readings

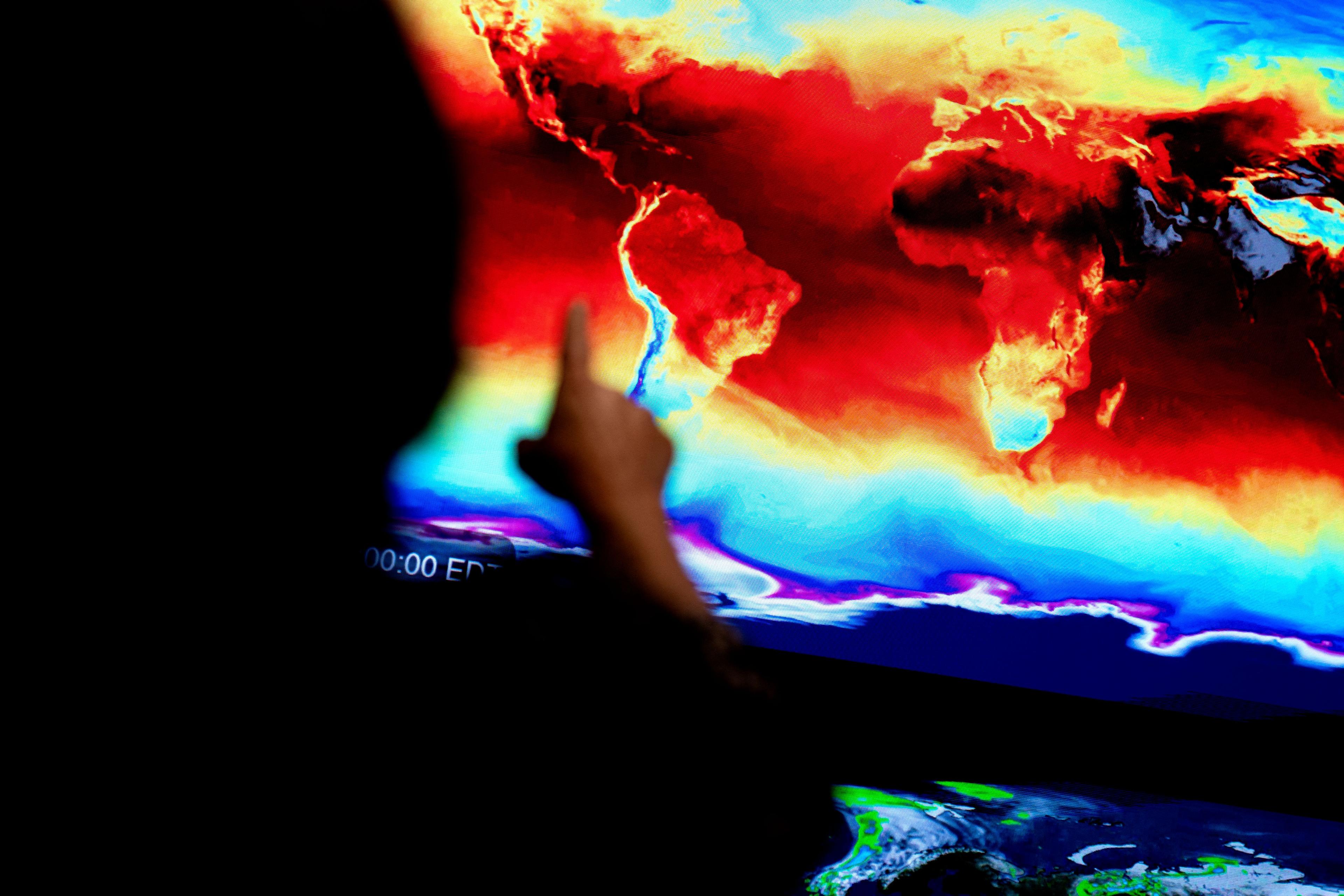

To get a more accurate reading of the Earth’s fluctuating temperatures, Mr. Spencer and climatologist John Christy developed a global atmospheric temperature data set from microwave measurements made by satellites.

Mr. Christy is a professor of atmospheric science at the University of Alabama in Huntsville and director of the Earth System Science Center. Along with Mr. Spencer, he received NASA’s Exceptional Scientific Achievement Medal for their work on satellite-based temperature monitoring.

They started their project in 1989 and analyzed data going back to 1979.

According to the satellite data, the Earth’s temperature has been rising since 1979 at an average rate of 0.14 degrees Celsius per decade.

And while 2023 was the hottest year on record, a result of the long-term linear warming trend, they say it’s not a cause for public panic.

“Yes, it appears 2023 was the warmest in the last 100 years or so. But numbers matter. The magnitude isn’t large enough for anyone to feel,” Mr. Spencer said.

“Besides, a single year is weather, not climate. What matters is the long-term trend, say many decades.”

He said the 2023 data, which extends the record since 1979 to 45 years, doesn’t alter the overall trend of 0.14 degrees Celsius per decade.
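A figure such as 0.14 degrees Celsius per decade is the slope of a straight line fitted to a series of annual temperature anomalies. The sketch below assumes an ordinary least-squares fit (the article does not specify the UAH team’s exact method) and runs it on a synthetic, perfectly linear series rather than real satellite data. Under those assumptions, it also shows why one unusually warm final year moves a 45-year trend only slightly.

```python
def trend_per_decade(years, anomalies):
    """Ordinary least-squares slope of anomaly vs. year, scaled to per decade."""
    n = len(years)
    x_bar = sum(years) / n
    y_bar = sum(anomalies) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(years, anomalies))
    sxx = sum((x - x_bar) ** 2 for x in years)
    return 10 * sxy / sxx


# Synthetic series (not real satellite data): a perfectly linear rise of
# 0.014 C per year from 1979 through 2023, i.e., 45 annual values.
years = list(range(1979, 2024))
anomalies = [0.014 * (y - 1979) for y in years]

rate = trend_per_decade(years, anomalies)
total = rate * (years[-1] - years[0]) / 10
print(f"trend: {rate:.2f} C/decade, implied change 1979-2023: {total:.2f} C")

# Add a hypothetical extra 0.3 C to the final year only and refit.
bumped = anomalies[:-1] + [anomalies[-1] + 0.3]
print(f"with a warm final year: {trend_per_decade(years, bumped):.3f} C/decade")
```

On this synthetic series, adding 0.3 degrees to 2023 alone raises the fitted trend by less than 0.01 degrees Celsius per decade, because a single point carries little weight in a fit over 45 years.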

“I believe both satellites and thermometers show a warming trend, especially since the 1970s,” Mr. Spencer said.

“But the strength of that trend is considerably less than what climate models predict, and it is those models which are used to argue for changes in energy policy and CO2 emissions reduction.”

An employee gestures toward a global map showing information coming in from NASA satellites at an exhibit at NASA headquarters in Washington on June 21, 2023. (Stefani Reynolds/AFP via Getty Images)

Lt. Col. Shewchuk agreed that satellite-based temperature data is more precise and shows a much smaller warming trend than NOAA’s surface-based record.

“The satellite data are a better measure of global temperature change because [they] do not suffer from conventional surface temperature station location problems or the numerous forms of NOAA data editing activities,” he said.

Satellite readings are also “routinely calibrated to radiosonde (weather balloon) data, which are the gold standard for atmospheric data,” he said.

Mr. Spencer published a report on Jan. 24 that addresses inaccuracies in climate modeling.

“Warming of the global climate system over the past half-century has averaged 43 percent less than that produced by computerized climate models used to promote changes in energy policy,” the report reads.

“Contrary to media reports and environmental organizations’ press releases, global warming offers no justification for carbon-based regulation.”

Mr. Spencer said the public has been led to believe that modeling is “fairly accurate,” but that a number of additional variables added to the models result in higher temperature estimates.

“Current claims of a climate crisis are invariably the result of reliance on the models producing the most warming, not on actual observations of the climate system which reveal unremarkable changes over the past century or more,” he wrote.

NASA Props Up Ground Readings

NASA claims on its website that ground thermometers are more accurate than satellite measurements.

“While satellites provide valuable information about Earth’s temperature, ground thermometers are considered more reliable because they directly measure the temperature where people reside,” NASA stated.

“Satellite data require complex processing and modeling to convert brightness measurements into temperature readings, making ground thermometers a more direct and accurate source of temperature information for us.”

Mr. Spencer quickly pointed out the flaws in NASA’s claim.

“Surface thermometers only cover a tiny fraction of the Earth, whereas the satellites provide nearly complete global coverage,” he said.

“NASA’s complaint that the 16 separate satellites must be pieced together ‘like a jigsaw puzzle’ is ironic since the surface temperature record is pieced together from hundreds (if not thousands) of stations, with almost none of them, anywhere, providing a continuous, uninterrupted record unaffected by increasing urban heat island effects.

“Finally, the complaint is that satellites only measure the deep atmosphere, not the surface where people live. … Well, if that is so, why are deep ocean temperatures touted as being so valuable for climate research? All of these measurements are important in their own right, and each system has its strengths and weaknesses. Our satellite dataset is widely used by climate researchers around the world.”

As to NASA’s critique that satellites don’t directly measure temperature but instead the brightness of Earth’s atmosphere, making them inaccurate, Mr. Spencer said: “Strictly speaking, that is true. But surface thermometers are electronic, so (technically) they measure electrical resistance.

“The satellites are calibrated with the highest quality, laboratory-standard platinum resistance thermometers. If NASA is going to fault remotely-sensed satellite data, they might as well shut down their myriad Earth satellite programs, which have the same (supposed) ‘defect.’”
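Mr. Spencer’s remark that electronic thermometers technically measure electrical resistance can be made concrete. Platinum resistance thermometers are commonly converted from ohms to degrees with the Callendar-Van Dusen equation, which at or above 0 degrees Celsius reduces to R(T) = R0(1 + A·T + B·T²). The sketch below uses the standard IEC 60751 coefficients and a 100-ohm (Pt100) sensor; whether any particular surface station or satellite calibration target uses exactly these values is an assumption.

```python
import math

# IEC 60751 Callendar-Van Dusen coefficients for industrial platinum
# resistance thermometers, valid here for 0 C <= T <= 850 C.
# (Below 0 C the standard adds a third term, omitted in this sketch.)
A = 3.9083e-3
B = -5.775e-7


def resistance(t_c: float, r0: float = 100.0) -> float:
    """Resistance in ohms at t_c degrees C for a sensor reading r0 ohms at 0 C."""
    return r0 * (1 + A * t_c + B * t_c ** 2)


def temperature(r_ohms: float, r0: float = 100.0) -> float:
    """Invert the quadratic to recover temperature (C) from a resistance reading."""
    # Solve B*T^2 + A*T + (1 - R/R0) = 0; the "+" root is the physical one.
    c = 1 - r_ohms / r0
    return (-A + math.sqrt(A ** 2 - 4 * B * c)) / (2 * B)


r = resistance(25.0)
print(f"25 C -> {r:.4f} ohms -> {temperature(r):.4f} C")
```

The round trip recovers the input temperature, which is the sense in which a resistance reading becomes a temperature reading.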

Lt. Col. Shewchuk called NASA’s claim that satellite data is inferior to surface temperature readings “nonsense.”

“UAH satellite data is the only data source that is truly global in nature. It effectively measures the temperature of earth’s entire atmosphere, and especially the lower troposphere—where our weather is actually created,” he said.

“The only limitation is that the satellite data only begins in 1979.”

Mr. Watts said that when he looked at data from ground surface stations in grassy fields (absent an urban heat island effect), the temperature readings closely matched Mr. Spencer’s satellite data.

When asked why NOAA doesn’t use only thermometers that have no possibility of an urban heat island effect, Mr. Spencer said: “I think their goal is not to get the most accurate long-term temperature record but to use as much thermometer data as they can get their hands on. This is good to build a congressionally-funded program and keep people employed.”

The $1.3 trillion now being spent annually on climate initiatives is nowhere near enough, according to the Climate Policy Initiative.


“In the average scenario, the annual climate finance needed through 2030 increases steadily from $8.1 to $9 trillion. Then, estimated needs jump to over $10 trillion each year from 2031 to 2050,” the group stated.

“This means that climate finance must increase by at least five-fold annually, as quickly as possible, to avoid the worst impacts of climate change.”
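Comparing the Climate Policy Initiative’s estimated needs with the $1.3 trillion now spent shows where “at least five-fold” comes from. A minimal arithmetic sketch using only the figures quoted above:

```python
# Figures quoted above, in trillions of U.S. dollars per year.
current = 1.3  # 2021-2022 average
needs = {
    "through 2030, low end": 8.1,
    "through 2030, high end": 9.0,
    "2031-2050, minimum": 10.0,
}

for label, need in needs.items():
    # Multiple of current spending that each estimate implies.
    print(f"{label}: {need / current:.1f}x current spending")
```

The multiples run from about 6.2 to about 7.7, consistent with the group’s “at least five-fold” wording.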

The organization lists its funders on its website, including the Rockefeller Foundation, WWF, and Bloomberg Philanthropies. Its partners include BlackRock, two U.N. climate groups, several large global banks, and government groups such as the Global Covenant of Mayors for Climate and Energy.

Editor’s Note: This article has been updated to include a comment from NOAA.
